Estimation of Lighting Environment

Difference Sphere: An Approach to Near Light Source Estimation

We present a novel approach for estimating light sources from a single image of a scene illuminated by near point light sources, directional light sources, and ambient light. We employ a pair of reference spheres as light probes and introduce the difference sphere, which we obtain by differencing the intensities of the two image regions of the reference spheres. Because differencing eliminates the effects of directional light sources and ambient light, the difference sphere enables us to estimate near point light sources, including their radiance, which has been difficult in previous work that assumed only distant directional light sources.

We also show that analysis of gray level contours on spherical surfaces facilitates separate identification of multiple combined light sources and is well suited to the difference sphere. Once we estimate point light sources with the difference sphere, we update the input image by eliminating their influence and then estimate other remaining light sources, that is, directional light sources and ambient light. We demonstrate the effectiveness of the entire algorithm with experimental results.

Estimation of Lighting Environment

Direct method

estimates a lighting distribution with images of a lighting environment, which are captured by an omnidirectional camera, a mirror sphere, etc.

It has difficulty estimating the lighting environment of an indoor scene, where near light sources must be handled: their intensity attenuates with distance, so their effects depend on their positions.

Indirect method

estimates a lighting distribution by analyzing images of reference objects under a lighting environment.

We present a novel method for estimating near light sources, directional light sources and ambient light with reference spheres.

Single Sphere

S-surface

illuminated by a single light source

M-surface

illuminated by multiple light sources

By analyzing an S-surface, we can estimate the parameters of a light source that illuminates the surface.

Difference Sphere

Eliminating all the effects of directional light sources and ambient light, we can estimate the parameter of a point light source that illuminates an S-surface.
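The cancellation can be checked numerically with a minimal sketch. It assumes a Lambertian sphere with unit albedo, inverse-square attenuation for the point light source, and a difference taken between points that share the same surface normal on the two spheres. The function names (`point_term`, `shade`, `difference`) and the shading model details are illustrative assumptions, not the authors' implementation.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def point_term(normal, center, radius, p_pos, p_rad):
    """Lambertian contribution of a near point light at the surface point
    center + radius * normal, with inverse-square attenuation (assumed model)."""
    x = [c + radius * n for c, n in zip(center, normal)]
    v = [p - xi for p, xi in zip(p_pos, x)]
    d = math.sqrt(dot(v, v))  # assumes the light is not on the surface (d > 0)
    return p_rad * max(0.0, dot(normal, v) / d) / (d * d)


def shade(normal, center, radius, ambient, d_dir, d_rad, p_pos, p_rad):
    """Total intensity: ambient + directional + point light terms."""
    # The ambient and directional terms depend only on the normal,
    # not on where the sphere is placed.
    distant = ambient + d_rad * max(0.0, dot(normal, d_dir))
    return distant + point_term(normal, center, radius, p_pos, p_rad)


def difference(normal, center_a, center_b, radius,
               ambient, d_dir, d_rad, p_pos, p_rad):
    """Intensity of the difference sphere at the given normal."""
    ia = shade(normal, center_a, radius, ambient, d_dir, d_rad, p_pos, p_rad)
    ib = shade(normal, center_b, radius, ambient, d_dir, d_rad, p_pos, p_rad)
    return ia - ib
```

Calling `difference` with and without the ambient and directional terms gives the same value up to rounding, because only the point light term depends on the sphere position.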

Estimation Algorithm

Twofold algorithm

1. Difference sphere

Estimate point light sources

Eliminating their effects from the input image, we generate a new input image.

2. Single sphere

Estimate directional light sources and ambient light.

In each sub-step,

- Convert image regions to contour representation,

- Estimate light source candidates, and

- Verify the candidates.

We can identify an M-surface by analyzing the residual that is generated by eliminating the effects of the light source candidate.

Continuing the procedure iteratively with the updated image, we can find correct estimation paths.
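One plausible reading of the residual check in the verification step is sketched below. It assumes a directional light candidate on a Lambertian sphere and treats a region as consistent with a single source when the residual left after subtracting the candidate is nearly constant, with any constant offset attributed to ambient light. The helper names and the tolerance `tol` are hypothetical; the actual criterion used by the authors is not stated here.

```python
def render_directional(normals, direction, radiance):
    """Lambertian intensities produced by one directional light candidate."""
    return [radiance * max(0.0, sum(n * d for n, d in zip(nrm, direction)))
            for nrm in normals]


def residual(observed, rendered):
    """Image left after eliminating the effect of the candidate."""
    return [o - r for o, r in zip(observed, rendered)]


def looks_like_s_surface(observed, rendered, tol=1e-3):
    """True if the residual is flat, i.e. the candidate explains all shading.
    A non-flat residual suggests an M-surface (more sources remain)."""
    res = residual(observed, rendered)
    return max(res) - min(res) <= tol
```

When the check fails, the residual itself can serve as the updated input for the next iteration.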

Our Key Ideas

Difference sphere

a virtual sphere generated by differencing two image regions of reference spheres

We eliminate all the effects of directional light sources and ambient light.

This makes the problem simpler.

Image analysis with contour representation

3D geometric characteristics of shading in a 2D image

It reflects characteristics of the light sources.

Intensity + 3D geometry

Model Definitions

Reference spheres

known shape and BRDF (Lambertian model); mutual reflection is ignored

Camera

accurately calibrated

Lighting environment

multiple light sources

unknown number

unknown type

point light source, directional light source, and ambient light

S-Surface Analysis

Feature 1

A contour line on an S-surface forms an arc on a 3D plane (Feature Plane).

Feature 2

The normal of the plane denotes the direction of the light source that illuminates the surface.
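Features 1 and 2 can be illustrated for the simplest case, a single directional light on a Lambertian unit sphere: points of equal intensity share the same angle to the light direction, so they lie on a plane perpendicular to it. The sketch below builds such contour points and recovers the plane normal from any three of them with a cross product. All function names are illustrative assumptions, and the recovered normal is defined only up to sign.

```python
import math


def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def normalize(v):
    n = math.sqrt(sum(x * x for x in v))
    return tuple(x / n for x in v)


def contour_point(light, u, v, cos_theta, phi):
    """Point on the unit sphere whose normal makes angle theta with `light`.
    `u` and `v` must be unit vectors orthogonal to `light` and to each other."""
    s = math.sqrt(1.0 - cos_theta ** 2)
    return tuple(cos_theta * l + s * (math.cos(phi) * a + math.sin(phi) * b)
                 for l, a, b in zip(light, u, v))


def plane_normal(p0, p1, p2):
    """Unit normal of the plane through three non-collinear contour points."""
    return normalize(cross(sub(p1, p0), sub(p2, p0)))
```

With real image data, a least-squares plane fit over many contour points would be more robust than three points; the three-point version is used here only to keep the geometry visible.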

Feature 3

The intensity ratio in an S-surface characterizes the light source.

Single sphere with a directional light source

⇒ We can obtain Ld and then La

Difference sphere with a point light source

⇒ We can obtain DP and then Lp.
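For the single sphere case, the order "Ld and then La" can be illustrated with a small sketch. It assumes the lit part of the S-surface follows I = La + Ld cos θ (Lambertian, unit albedo) and that two contour lines with known cos θ are available, for example from the offsets of their feature planes. The function name and this two-sample formulation are assumptions for illustration, not the authors' procedure.

```python
def solve_directional_and_ambient(i1, cos1, i2, cos2):
    """Recover (Ld, La) from two contour intensities under I = La + Ld * cos(theta).

    i1, i2     : intensities on two contour lines of the same S-surface
    cos1, cos2 : cosines of the angle between the surface normal and the
                 light direction on those contours (must differ)
    """
    if cos1 == cos2:
        raise ValueError("the two contours must have different cos(theta)")
    # The intensity difference removes La, so Ld is obtained first ...
    ld = (i1 - i2) / (cos1 - cos2)
    # ... and La follows by substituting Ld back into either sample.
    la = i1 - ld * cos1
    return ld, la
```

For example, intensities 0.83 at cos θ = 0.9 and 0.48 at cos θ = 0.4 are consistent with Ld = 0.7 and La = 0.2 under this model.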

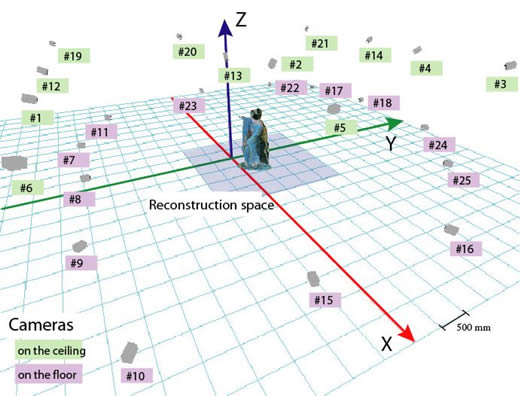

Experimental Results

Future Work

More complex lighting environments

Configuration of the reference spheres ...

References

Difference Sphere: An Approach to Near Light Source Estimation

Takeshi Takai, Koichiro Niinuma, Atsuto Maki, and Takashi Matsuyama, IEEE Computer Society Conference on Computer Vision and Pattern Recognition, pp. I-98 - I-105, 2004

High Fidelity and Versatile Visualization of 3D Video

Takeshi Takai, Doctoral Dissertation, 2005.3